Natural Language Processing for Icelandic with PyPy: A Case Study

Icelandic is one of the smallest languages of the world, with about 370,000 speakers. It is a language in the Germanic family, most similar to Norwegian, Danish and Swedish, but closer to the original Old Norse spoken throughout Scandinavia until about the 14th century CE.

As with other small languages, there are worries that the language may not survive in a digital world, where all kinds of fancy applications are developed first - and perhaps only - for the major languages. Voice assistants, chatbots, spelling and grammar checking utilities, machine translation, etc., are increasingly becoming staples of our personal and professional lives, but if they don’t exist for Icelandic, Icelanders will gravitate towards English or other languages where such tools are readily available.

Iceland is a technology-savvy country, with world-leading adoption rates of the Internet, PCs and smart devices, and a thriving software industry. So the government figured that it would be worthwhile to fund a 5-year plan to build natural language processing (NLP) resources and other infrastructure for the Icelandic language. The project focuses on collecting data and developing open source software for a range of core applications, such as tokenization, vocabulary lookup, n-gram statistics, part-of-speech tagging, named entity recognition, spelling and grammar checking, neural language models and speech processing.

My name is Vilhjálmur Þorsteinsson, and I’m the founder and CEO of a software startup Miðeind in Reykjavík, Iceland, that employs 10 software engineers and linguists and focuses on NLP and AI for the Icelandic language. The company participates in the government’s language technology program, and has contributed significantly to the program’s core tools (e.g., a tokenizer and a parser), spelling and grammar checking modules, and a neural machine translation stack.

When it came to a choice of programming languages and development tools for the government program, the requirements were for a major, well supported, vendor-and-OS-agnostic FOSS platform with a large and diverse community, including in the NLP space. The decision to select Python as a foundational language for the project was a relatively easy one. That said, there was a bit of trepidation around the well known fact that CPython can be slow for inner-core tasks, such as tokenization and parsing, that can see heavy workloads in production.

I first became aware of PyPy in early 2016 when I was developing a crossword game Netskrafl in Python 2.7 for Google App Engine. I had a utility program that compressed a dictionary into a Directed Acyclic Word Graph and was taking 160 seconds to run on CPython 2.7, so I tried PyPy and to my amazement saw a 4x speedup (down to 38 seconds), with literally no effort besides downloading the PyPy runtime.

This led me to select PyPy as the default Python interpreter for my company’s Python development efforts as well as for our production websites and API servers, a role in which it remains to this day. We have followed PyPy’s upgrades along the way, being just about to migrate our minimally required language version from 3.6 to 3.7.

In NLP, speed and memory requirements can be quite important for software usability. On the other hand, NLP logic and algorithms are often complex and challenging to program, so programmer productivity and code clarity are also critical success factors. A pragmatic approach balances these factors, avoids premature optimization and seeks a careful compromise between maximal run-time efficiency and minimal programming and maintenance effort.

Turning to our use cases, our Icelandic text tokenizer "Tokenizer" is fairly light, runs tight loops and performs a large number of small, repetitive operations. It runs very well on PyPy’s JIT and has not required further optimization.

Our Icelandic parser Greynir (known on PyPI as reynir) is, if I may say so myself, a piece of work. It parses natural language text according to a hand-written context-free grammar, using an Earley-type algorithm as enhanced by Scott and Johnstone. The CFG contains almost 7,000 nonterminals and 6,000 terminals, and the parser handles ambiguity as well as left, right and middle recursion. It returns a packed parse forest for each input sentence, which is then pruned by a scoring heuristic down to a single best result tree.
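To give a feel for the kind of algorithm involved, here is a minimal sketch of a plain Earley recognizer in Python. It is not Greynir's code: it omits the Scott and Johnstone enhancements, builds no parse forest, and assumes a grammar without empty productions. The names `Item`, `earley_recognize` and the toy grammar are made up for illustration.

```python
from collections import namedtuple

# An Earley item: rule `lhs -> rhs`, a dot position, and the input index where it started.
Item = namedtuple("Item", "lhs rhs dot start")


def earley_recognize(grammar, start_symbol, tokens):
    """Return True if `tokens` can be derived from `start_symbol`.

    `grammar` maps each nonterminal to a list of right-hand sides (tuples).
    Any symbol that is not a key of `grammar` is treated as a terminal.
    Assumption: the grammar has no empty (epsilon) productions.
    """
    chart = [[] for _ in range(len(tokens) + 1)]
    seen = [set() for _ in range(len(tokens) + 1)]

    def add(pos, item):
        if item not in seen[pos]:
            seen[pos].add(item)
            chart[pos].append(item)

    for rhs in grammar[start_symbol]:
        add(0, Item(start_symbol, rhs, 0, 0))

    for pos in range(len(tokens) + 1):
        i = 0
        while i < len(chart[pos]):  # chart[pos] may grow while we walk it
            item = chart[pos][i]
            i += 1
            if item.dot < len(item.rhs):
                symbol = item.rhs[item.dot]
                if symbol in grammar:
                    # Predict: expand the nonterminal after the dot.
                    for rhs in grammar[symbol]:
                        add(pos, Item(symbol, rhs, 0, pos))
                elif pos < len(tokens) and tokens[pos] == symbol:
                    # Scan: the next token matches the terminal after the dot.
                    add(pos + 1, item._replace(dot=item.dot + 1))
            else:
                # Complete: advance every item that was waiting for item.lhs.
                for parent in chart[item.start]:
                    if (parent.dot < len(parent.rhs)
                            and parent.rhs[parent.dot] == item.lhs):
                        add(pos, parent._replace(dot=parent.dot + 1))

    return any(
        it.lhs == start_symbol and it.dot == len(it.rhs) and it.start == 0
        for it in chart[len(tokens)]
    )


# A tiny ambiguous, left- and right-recursive toy grammar.
toy_grammar = {"E": [("E", "+", "E"), ("n",)]}
print(earley_recognize(toy_grammar, "E", "n + n + n".split()))
```

The inner `while` loop is the kind of tight, repetitive code that a tracing JIT tends to handle well, and it is roughly the part that the next paragraph describes moving to C++.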

This parser was originally coded in pure Python and turned out to be unusably slow when run on CPython - but usable on PyPy, where it was 3-4x faster. However, when we started applying it to heavier production workloads, it became apparent that it needed to be faster still. We then proceeded to convert the innermost Earley parsing loop from Python to tight C++ and to call it from PyPy via CFFI, with callbacks for token-terminal matching functions (“business logic”) that remained on the Python side. This made the parser much faster (on the order of 100x faster than the original on CPython) and quick enough for our production use cases. Even after moving much of the heavy processing to C++ and using CFFI, PyPy still gives a significant speed boost over CPython.

Connecting C++ code with PyPy proved to be quite painless using CFFI, although we had to figure out a few magic incantations in our build module to make it compile smoothly during setup from source on Windows and macOS in addition to Linux. Of course, we build binary PyPy and CPython wheels for the most common targets so most users don’t have to worry about setup requirements.

With the positive experience from the parser project, we proceeded to take a similar approach for two other core NLP packages: our compressed vocabulary package BinPackage (known on PyPI as islenska) and our trigrams database package Icegrams. These packages both take large text input (3.1 million word forms with inflection data in the vocabulary case; 100 million tokens in the trigrams case) and compress it into packed binary structures. These structures are then memory-mapped at run-time using mmap and queried via Python functions with a lookup time in the microseconds range. The low-level data structure navigation is done in C++, called from Python via CFFI. The ex-ante preparation, packing, bit-fiddling and data structure generation is fast enough with PyPy, so we haven’t seen a need to optimize that part further.
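The general idea of a packed, memory-mapped lookup structure can be sketched in pure Python with the standard `mmap` and `struct` modules. This is only an illustration under stated assumptions, not the format used by BinPackage or Icegrams: the fixed-width record layout (a 16-byte zero-padded key plus a 32-bit count), the binary search, and the names `RECORD`, `build` and `lookup` are all hypothetical.

```python
import mmap
import os
import struct
import tempfile

# Hypothetical record layout: 16-byte zero-padded UTF-8 key + 32-bit little-endian count.
# Assumption: every key fits in 16 bytes and contains no NUL bytes.
RECORD = struct.Struct("<16sI")


def build(path, counts):
    """Write (word, count) pairs as fixed-width records, sorted by encoded key."""
    with open(path, "wb") as f:
        for key, count in sorted((w.encode("utf-8"), c) for w, c in counts.items()):
            f.write(RECORD.pack(key, count))


def lookup(buf, word):
    """Binary-search the memory-mapped records; return the count or None."""
    target = word.encode("utf-8").ljust(16, b"\0")
    lo, hi = 0, len(buf) // RECORD.size
    while lo < hi:
        mid = (lo + hi) // 2
        key, count = RECORD.unpack_from(buf, mid * RECORD.size)
        if key == target:
            return count
        if key < target:
            lo = mid + 1
        else:
            hi = mid
    return None


path = os.path.join(tempfile.mkdtemp(), "counts.bin")
build(path, {"hestur": 3, "maður": 7, "og": 120})
with open(path, "rb") as f:
    with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as buf:
        print(lookup(buf, "maður"), lookup(buf, "köttur"))
```

Because the file is mapped rather than read, the operating system pages in only the parts of the structure that a query touches, and several processes can share the same pages.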

To showcase our tools, we host public (and open source) websites such as greynir.is for our parsing, named entity recognition and query stack and yfirlestur.is for our spell and grammar checking stack. The server code on these sites is all Python running on PyPy using Flask, wrapped in gunicorn and hosted on nginx. The underlying database is PostgreSQL accessed via SQLAlchemy and psycopg2cffi. This setup has served us well for 6 years and counting, being fast, reliable and having helpful and supporting communities.

As can be inferred from the above, we are avid fans of PyPy and commensurately thankful for the great work by the PyPy team over the years. PyPy has enabled us to use Python for a larger part of our toolset than CPython alone would have supported, and its smooth integration with C/C++ through CFFI has helped us attain a better tradeoff between performance and programmer productivity in our projects. We wish for PyPy a great and bright future and also look forward to exciting related developments on the horizon, such as HPy.

Error Message Style Guides of Various Languages

PyPy has been trying to produce good SyntaxErrors and other errors for a long time. CPython has also made an enormous push to improve its SyntaxErrors in the last few releases. These improvements are great, but the process feels somewhat arbitrary sometimes. To see what other languages are doing, I asked people on Twitter whether they know of error message style guides for other programming languages.

Wonderfully, people answered me with lots of helpful links (full list at the end of the post), thank you everybody! All those sources are very interesting and contain many great points, I recommend reading them directly! In this post, I'll try to summarize some common themes or topics that I thought were particularly interesting.

Language Use

Almost all guides stress the need for plain and simple English, as well as conciseness and clarity [Flix, Racket, Rust, Flow]. Flow suggests putting coding effort into making the grammar correct, for example in the case of plurals or to distinguish between "a" and "an".
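As a rough sketch of what such "grammar effort" could look like in Python, the hypothetical helpers below pick an article and pluralize a noun before the message is assembled. The function names and the message wording are my own, not taken from any of the guides. The article heuristic only looks at the first letter, so it gets words like "hour" wrong.

```python
def indefinite_article(noun):
    """Pick "a" or "an" from the first letter (a rough heuristic only)."""
    return "an" if noun and noun[0].lower() in "aeiou" else "a"


def count_phrase(n, singular, plural=None):
    """Return e.g. "1 argument" or "3 arguments"."""
    if plural is None:
        plural = singular + "s"
    return f"{n} {singular if n == 1 else plural}"


def arity_error(name, expected, got):
    """Build an arity message with correct plurals and verb agreement."""
    verb = "was" if got == 1 else "were"
    return (f"`{name}` expects {count_phrase(expected, 'argument')}, "
            f"but {count_phrase(got, 'argument')} {verb} given")


print(arity_error("f", 2, 1))
print(f"expected {indefinite_article('integer')} integer")
```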

The tone should be friendly and neutral, and the messages should not blame the programmer [Flow]. Rust and Flix suggest avoiding the term 'illegal' and using something like 'invalid' instead.

Flow suggests avoiding "compiler speak". For example, terms like 'token' and 'identifier' should be avoided in favor of terms that are more familiar to programmers (e.g. "name" is better). The Racket guide goes further and has a list of allowed technical terms and some prohibited terms.

Structure

Several guides (such as Flix and Flow) point out an 80/20 rule: 80% of the times an error message is read, the developer knows that message well and knows exactly what to do. For this use case it's important that the message is short. On the other hand, 20% of the times this same message will have to be understood by a developer who has never seen it before and is confused, and so the message needs to contain enough information to allow them to find out what is going on. So the error message needs to strike a balance between brevity and clarity.

The Racket guide proposes to use the following general structure for errors: 'State the constraint that was violated ("expected a"), followed by what was found instead.'

The Rust guide says to avoid "Did you mean?" and questions in general, and wants the compiler to instead be explicit about why something was suggested. The example the Rust guide gives is: 'Compare "did you mean: Foo" vs. "there is a struct with a similar name: Foo".' Racket goes further and forbids suggestions altogether because "Students will follow well‐meaning‐but‐wrong advice uncritically, if only because they have no reason to doubt the authoritative voice of the tool."
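To make the Rust-style recommendation concrete, here is a small sketch in Python that states why a name is being mentioned instead of asking a question. It uses the standard library's `difflib.get_close_matches`. The function name and the message wording are assumptions for illustration, not what any real compiler or interpreter prints.

```python
import difflib


def undefined_name_message(name, known_names):
    """Report an undefined name and explain any suggestion instead of asking."""
    message = f"name `{name}` is not defined"
    similar = difflib.get_close_matches(name, known_names, n=1)
    if similar:
        message += f"; there is a variable with a similar name: `{similar[0]}`"
    return message


print(undefined_name_message("lenght", ["length", "width"]))
print(undefined_name_message("zzz", ["length", "width"]))
```

Under the Racket guideline the second clause would simply be dropped, which is easy to do when the suggestion is built separately from the core message.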

Formatting and Source Positions

The Rust guide suggests putting all identifiers into backticks (like in Markdown), while Flow formats the error messages using full Markdown.

The Clang, Flow and Rust guides point out the importance of using precise source code spans to point to errors, which is especially important if the compiler information is used in the context of an IDE to show a red squiggly underline or some other highlighting. The spans should be as small as possible to point out the source of the error [Flow].
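A minimal sketch of how a precise span might be rendered in a terminal follows. The function name, the half-open column convention and the three-line layout are assumptions of mine, and real tools differ in the details.

```python
def render_span(source_line, start, end, message):
    """Underline columns [start, end) of `source_line` with carets."""
    width = max(end - start, 1)  # always show at least one caret
    return "\n".join([
        source_line,
        " " * start + "^" * width,
        " " * start + message,
    ])


# Underline only the offending name rather than the whole statement.
print(render_span("x = foo(1, 2)", 4, 7, "name `foo` is not defined"))
```

The same `(start, end)` pair is what an IDE would need in order to draw its squiggly underline, which is why keeping the span small matters.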

Conclusion

I am quite impressed by how advanced and well thought out the approaches are. I wonder whether it would make sense for Python to adopt a (probably minimal, to get started) subset of these ideas as guidelines for its own errors.

Sources

Rust: https://rustc-dev-guide.rust-lang.org/diagnostics.html

Racket: https://cs.brown.edu/~kfisler/Misc/error-msg-guidelines-racket-studlangs.pdf

More about the research that led to the Racket guidelines (including the referenced papers): https://twitter.com/ShriramKMurthi/status/1451688982761381892

Flow: https://calebmer.com/2019/07/01/writing-good-compiler-error-messages.html

Elm's error message catalog: https://github.com/elm/error-message-catalog

Reason: https://reasonml.github.io/blog/2017/08/25/way-nicer-error-messages.html

PyPy v7.3.7: bugfix release of python 3.7 and 3.8

We are releasing PyPy 7.3.7 to fix the recent 7.3.6 release's binary incompatibility with the previous 7.3.x releases. We mistakenly added fields to PyFrameObject and PyDateTime_CAPI that broke the promise of binary compatibility, which means that c-extension wheels compiled for 7.3.5 will not work with 7.3.6 and vice versa. Please do not use 7.3.6.

We have added a cursory test for binary API breakage to the https://github.com/pypy/binary-testing repo which hopefully will prevent such mistakes in the future.

Additionally, a few smaller bugs were fixed:

Use `uint` for the `request` argument of `fcntl.ioctl` (issue 3568)

Fix incorrect tracing of `while True` body in 3.8 (issue 3577)

Properly close resources when using a `concurrent.futures.ProcessPool` (issue 3317)

Fix the value of `LIBDIR` in `_sysconfigdata` in 3.8 (issue 3582)

You can find links to download the v7.3.7 releases here:

We would like to thank our donors for the continued support of the PyPy project. If PyPy is not quite good enough for your needs, we are available for direct consulting work. If PyPy is helping you out, we would love to hear about it and encourage submissions to our blog site via a pull request to https://github.com/pypy/pypy.org

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython's JIT even better.

If you are a python library maintainer and use C-extensions, please consider making a CFFI / cppyy version of your library that would be performant on PyPy. In any case both cibuildwheel and the multibuild system support building wheels for PyPy.

What is PyPy?

PyPy is a Python interpreter, a drop-in replacement for CPython 2.7, 3.7, and 3.8. It's fast (PyPy and CPython 3.7.4 performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

This PyPy release supports:

x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 64 bits, OpenBSD, FreeBSD)

64-bit ARM machines running Linux.

s390x running Linux

PyPy does support ARM 32 bit and PPC64 processors, but does not release binaries.

PyPy v7.3.6: release of python 2.7, 3.7, and 3.8

The PyPy team is proud to release version 7.3.6 of PyPy, which includes three different interpreters:

PyPy2.7, which is an interpreter supporting the syntax and the features of Python 2.7 including the stdlib for CPython 2.7.18+ (the `+` is for backported security updates)

PyPy3.7, which is an interpreter supporting the syntax and the features of Python 3.7, including the stdlib for CPython 3.7.12.

PyPy3.8, which is an interpreter supporting the syntax and the features of Python 3.8, including the stdlib for CPython 3.8.12. Since this is our first release of the interpreter, we regard it as "beta" quality. We welcome testing of this version; if you discover incompatibilities, please report them so we can gain confidence in the version.

The interpreters are based on much the same codebase, thus the multiple release. This is a micro release, all APIs are compatible with the other 7.3 releases. Highlights of the release, since the release of 7.3.5 in May 2021, include:

We have merged a backend for HPy, the better C-API interface. The backend implements HPy version 0.0.3.

Translation of PyPy into a binary, known to be slow, is now about 40% faster. On a modern machine, PyPy3.8 can translate in about 20 minutes.

PyPy Windows 64 is now available on conda-forge, along with nearly 700 commonly used binary packages. This new offering joins the more than 1000 conda packages for PyPy on Linux and macOS. Many thanks to the conda-forge maintainers for pushing this forward over the past 18 months.

Speed improvements were made to `io`, `sum`, `_ssl` and more. These were done in response to user feedback.

The 3.8 version of the release contains a beta-quality improvement to the JIT to better support compiling huge Python functions by breaking them up into smaller pieces.

The release of Python3.8 required a concerted effort. We were greatly helped by @isidentical (Batuhan Taskaya) and other new contributors.

The 3.8 package now uses the same layout as CPython, and many of the PyPy-specific changes to `sysconfig`, `distutils.sysconfig`, and `distutils.commands.install.py` have been removed. The `stdlib` now is located in `<base>/lib/pypy3.8` on `posix` systems, and in `<base>/Lib` on Windows. The include files on windows remain the same. On `posix` they are in `<base>/include/pypy3.8`. Note we still use the `pypy` prefix to prevent mixing the files with CPython (which uses `python`).
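If you want to check which layout a given interpreter uses, the standard `sysconfig` module can report it. The snippet below is a small sketch. The helper name `describe_layout` is made up, and the paths it prints depend entirely on the interpreter and platform you run it on.

```python
import sys
import sysconfig


def describe_layout():
    """Collect the interpreter name and its stdlib and include directories."""
    return {
        "implementation": sys.implementation.name,
        "stdlib": sysconfig.get_path("stdlib"),
        "include": sysconfig.get_path("include"),
    }


for key, value in describe_layout().items():
    print(f"{key}: {value}")
```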

We recommend updating. You can find links to download the v7.3.6 releases here:

We would like to thank our donors for the continued support of the PyPy project. If PyPy is not quite good enough for your needs, we are available for direct consulting work. If PyPy is helping you out, we would love to hear about it and encourage submissions to our blog via a pull request to https://github.com/pypy/pypy.org

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython's JIT even better. Since the previous release, we have accepted contributions from 7 new contributors, thanks for pitching in, and welcome to the project!

If you are a python library maintainer and use C-extensions, please consider making a CFFI / cppyy version of your library that would be performant on PyPy. In any case both cibuildwheel and the multibuild system support building wheels for PyPy.

What is PyPy?

PyPy is a Python interpreter, a drop-in replacement for CPython 2.7, 3.7, and soon 3.8. It's fast (PyPy and CPython 3.7.4 performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

This PyPy release supports:

x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 64 bits, OpenBSD, FreeBSD)

big- and little-endian variants of PPC64 running Linux,

s390x running Linux

64-bit ARM machines running Linux.

PyPy does support Windows 32-bit and ARM 32 bit processors, but does not release binaries. Please reach out to us if you wish to sponsor releases for those platforms.

What else is new?

For more information about the 7.3.6 release, see the full changelog.

Please update, and continue to help us make PyPy better.

Cheers, The PyPy team

Better JIT Support for Auto-Generated Python Code

Performance Cliffs

A common bad property of many different JIT compilers is that of a "performance cliff": A seemingly reasonable code change, leading to massively reduced performance due to hitting some weird property of the JIT compiler that's not easy to understand for the programmer (e.g. here's a blog post about the fix of a performance cliff when running React on V8). Hitting a performance cliff as a programmer can be intensely frustrating and turn people off from using PyPy altogether. Recently we've been working on trying to remove some of PyPy's performance cliffs, and this post describes one such effort.

The problem showed up in an issue where somebody found the performance of their website using Tornado a lot worse than what various benchmarks suggested. It took some careful digging to figure out what caused the problem: The slow performance was caused by the huge functions that the Tornado templating engine creates. These functions lead the JIT to behave in unproductive ways. In this blog post I'll describe why the problem occurs and how we fixed it.

Problem

After quite a bit of debugging we narrowed down the problem to the following reproducer: If you render a big HTML template (example) using the Tornado templating engine, the template rendering is really not any faster than CPython. A small template doesn't show this behavior, and other parts of Tornado seem to perform well. So we looked into how the templating engine works, and it turns out that the templates are compiled into Python functions. This means that a big template can turn into a really enormous Python function (Python version of the example). For some reason really enormous Python functions aren't handled particularly well by the JIT, and in the next section I'll explain some of the background that's necessary to understand why this happens.

Trace Limits and Inlining

To understand why the problem occurs, it's necessary to understand how PyPy's trace limit and inlining work. The tracing JIT has a maximum trace length built in; the reason for that is some limitation in the compact encoding of traces in the JIT. Another reason is that we don't want to generate arbitrarily large chunks of machine code. Usually, when we hit the trace limit, it is due to inlining. While tracing, the JIT will inline many of the functions called from the outermost one. This is usually good and improves performance greatly; however, inlining can also lead to the trace being too long. If that happens, we will mark a called function as uninlinable. The next time we trace the outer function we won't inline it, leading to a shorter trace, which hopefully fits the trace limit.

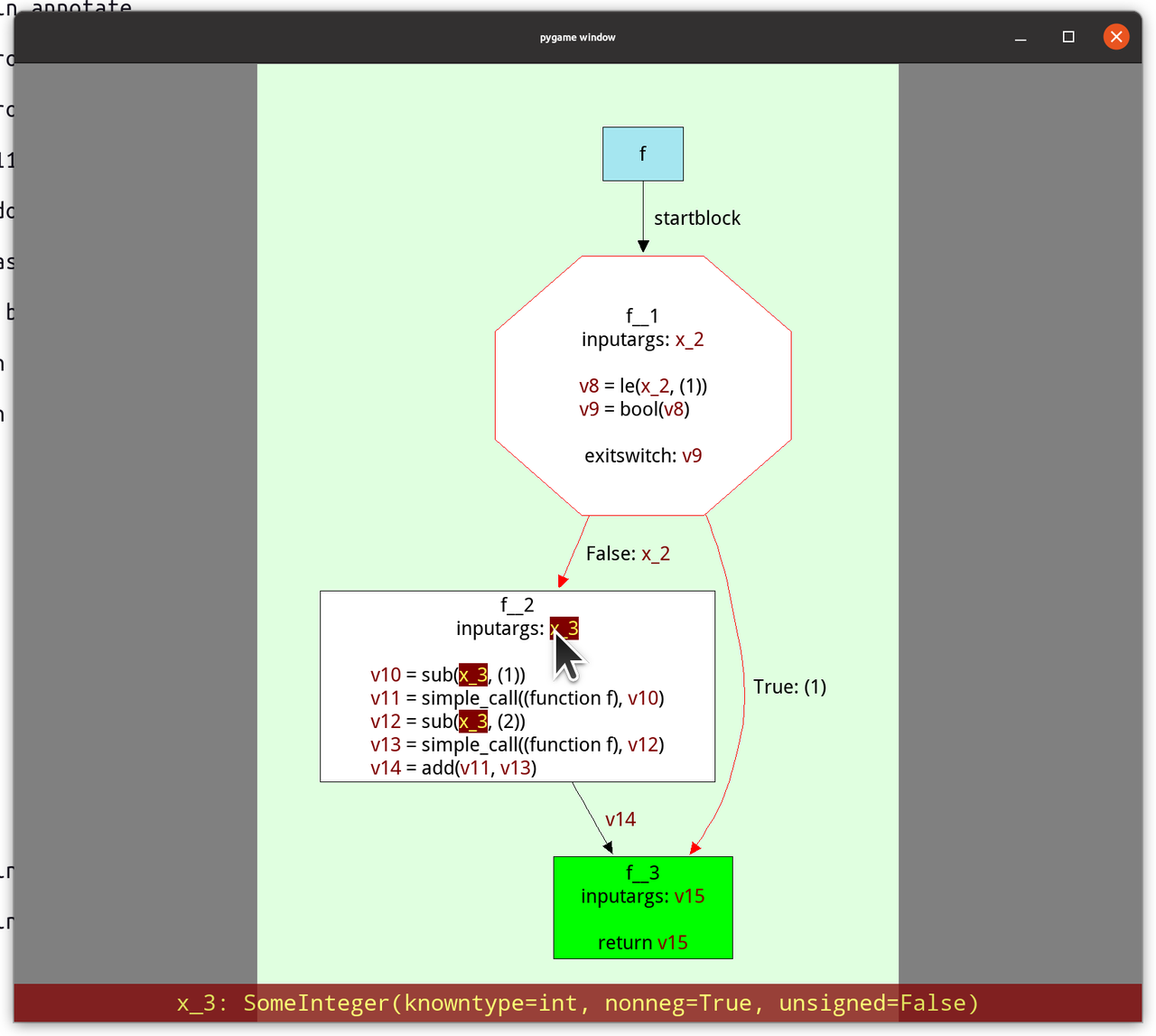

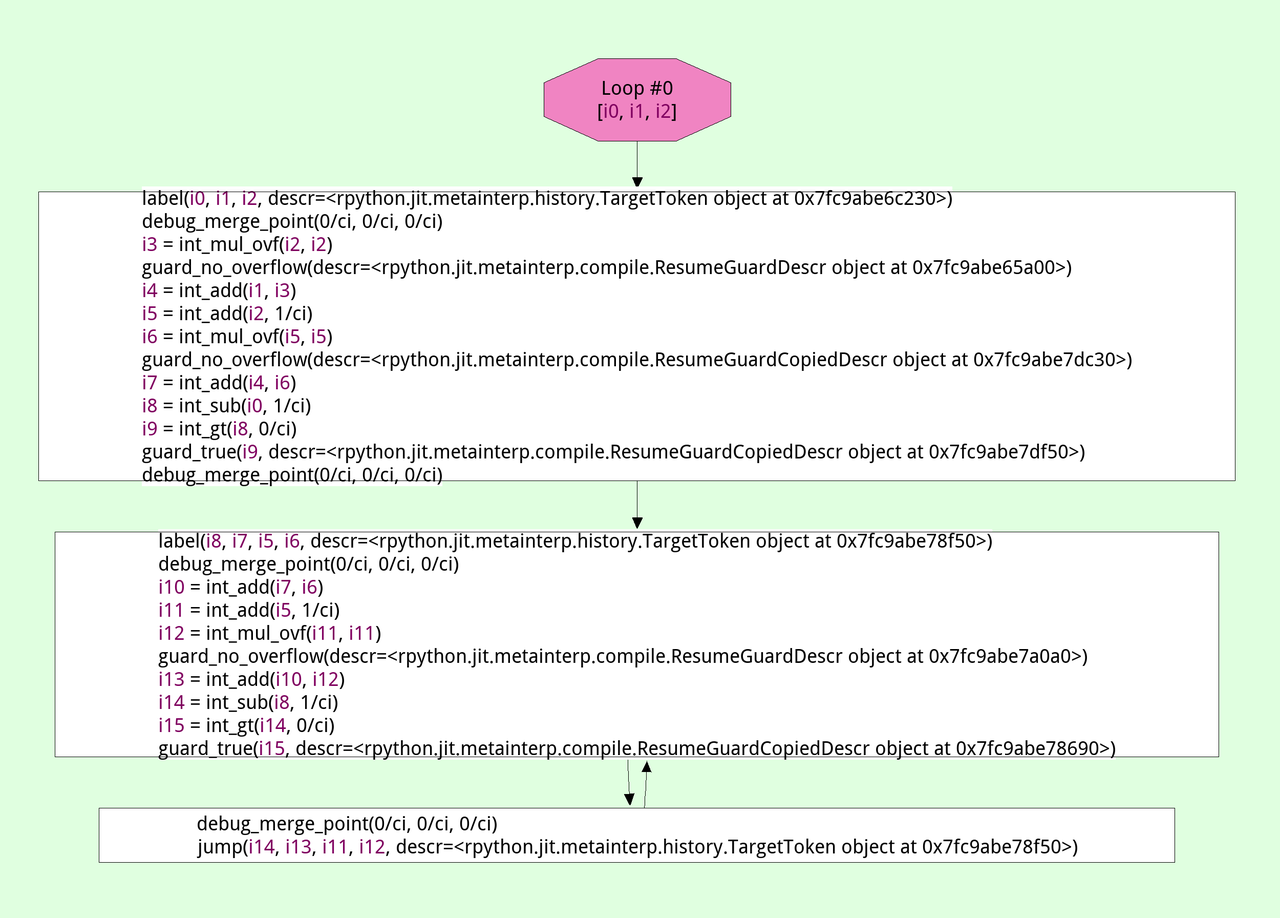

In the diagram above we trace a function f, which calls a function g, which is inlined into the trace. The trace ends up being too long, so the JIT disables inlining of g. The next time we try to trace f the trace will contain a call to g instead of inlining it. The trace ends up being not too long, so we can turn it into machine code when tracing finishes.

Now we know enough to understand what the problem with automatically generated code is: sometimes, the outermost function itself doesn't fit the trace limit, without any inlining going on at all. This is usually not the case for normal, hand-written Python functions. However, it can happen for automatically generated Python code, such as the code that the Tornado templating engine produces.

So, what happens when the JIT hits such a huge function? The function is traced until the trace is too long. Then the trace limit stops further tracing. Since nothing was inlined, we cannot make the trace shorter the next time by disabling inlining. Therefore, this happens again and again: the next time we trace the function we run into exactly the same problem. The net effect is that the function is even slowed down: we spend time tracing it, then stop tracing and throw the trace away. That effort is never useful, so the resulting execution can be slower than not using the JIT at all!

Solution

To get out of the endless cycle of useless retracing we first had the idea of simply disabling all code generation for such huge functions, which produce too long traces even if there is no inlining at all. However, that led to disappointing performance in the example Tornado program, because important parts of the code remain always interpreted.

Instead, our solution is now as follows: After we have hit the trace limit and no inlining has happened so far, we mark the outermost function as a source of huge traces. The next time we trace such a function, we do so in a special mode. In that mode, hitting the trace limit behaves differently: Instead of stopping the tracer and throwing away the trace produced so far, we will use the unfinished trace to produce machine code. This trace corresponds to the first part of the function, but stops at a basically arbitrary point in the middle of the function.

The question is what should happen when execution reaches the end of this unfinished trace. We want to be able to cover more of the function with machine code and therefore need to extend the trace from that point on. But we don't want to do that too eagerly to prevent lots and lots of machine code being generated. To achieve this behaviour we add a guard to the end of the unfinished trace, which will always fail. This has the right behaviour: a failing guard will transfer control to the interpreter, but if it fails often enough, we can patch it to jump to more machine code, that starts from this position. In that way, we can slowly explore the full gigantic function and add all those parts of the control flow graph that are actually commonly executed at runtime.

In the diagram we are trying to trace a huge function f, which leads to hitting the trace limit. However, nothing was inlined into the trace, so disabling inlining won't ensure a successful trace attempt the next time. Instead, we mark f as "huge". This has the effect that when we trace it again and are about to hit the trace limit, we end the trace at an arbitrary point by inserting a guard that always fails.

If this guard failure is executed often enough, we might patch the guard and add a jump to a further part of the function f. This can continue potentially several times, until the trace really hits an end point (for example by closing the loop and jumping back to trace 1, or by returning from f).
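The difference between the old and the new behaviour can be illustrated with a deliberately simplified toy model in Python. It is not PyPy's implementation: the function is modelled as a straight line of operations without control flow, every call is treated as hot enough to trace, and the constants `TRACE_LIMIT` and `BRIDGE_THRESHOLD` as well as the class `ToyJit` are invented for this sketch.

```python
TRACE_LIMIT = 100      # hypothetical limit, counted in "operations"
BRIDGE_THRESHOLD = 3   # hypothetical number of guard failures before extending


class ToyJit:
    def __init__(self, function_length, chunking):
        self.function_length = function_length
        self.chunking = chunking      # False models the old JIT, True the new one
        self.huge = False
        self.compiled_upto = 0        # operations covered by machine code
        self.guard_failures = 0
        self.traces_started = 0
        self.traces_compiled = 0

    def _trace(self, start):
        """Trace from `start`; return the end position, or None if aborted."""
        self.traces_started += 1
        if self.function_length - start <= TRACE_LIMIT:
            return self.function_length      # the trace reached the end
        if self.chunking and self.huge:
            return start + TRACE_LIMIT       # keep the unfinished trace
        self.huge = True                     # nothing was inlined: mark as huge
        return None                          # throw the trace away

    def run_once(self):
        """Model one execution of the function."""
        if self.compiled_upto == 0:
            end = self._trace(0)
        elif self.compiled_upto < self.function_length:
            # Execution reached the always-failing guard ending the machine code.
            self.guard_failures += 1
            if self.guard_failures < BRIDGE_THRESHOLD:
                return
            self.guard_failures = 0
            end = self._trace(self.compiled_upto)
        else:
            return                           # the whole function is compiled
        if end is not None:
            self.compiled_upto = end
            self.traces_compiled += 1


for chunking in (False, True):
    jit = ToyJit(function_length=450, chunking=chunking)
    for _ in range(50):
        jit.run_once()
    print(chunking, jit.traces_started, jit.traces_compiled, jit.compiled_upto)
```

In this model the variant without chunking starts a trace on every run and never compiles anything, while the chunking variant wastes one trace, then covers the function piece by piece and stops tracing once it is fully covered.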

Evaluation

Since this is a performance cliff that we didn't observe in any of our benchmarks ourselves, it's pointless to look at the effect that this improvement has on existing benchmarks – there shouldn't and indeed there isn't any.

Instead, we are going to look at a micro-benchmark that came out of the original bug report, one that simply renders a big artificial Tornado template 200 times. The code of the micro-benchmark can be found here.

All benchmarks were run 10 times in new processes. The means and standard deviations of the benchmark runs are:

| Implementation | Time taken (lower is better) |

|---|---|

| CPython 3.9.5 | 14.19 ± 0.35s |

| PyPy3 without JIT | 59.48 ± 5.41s |

| PyPy3 JIT old | 14.47 ± 0.35s |

| PyPy3 JIT new | 4.89 ± 0.10s |

What we can see is that while the old JIT is very helpful for this micro-benchmark, it only brings the performance up to CPython levels, not providing any extra benefit. The new JIT gives an almost 3x speedup.
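For readers who want to run a similar measurement, the methodology (several runs, each in a fresh process, reported as mean and standard deviation) can be sketched as follows. This is not the script used for the numbers above. The helper name is hypothetical, the timing includes interpreter start-up, and the use of the sample standard deviation is an assumption.

```python
import statistics
import subprocess
import sys
import time


def time_in_fresh_processes(argv, runs=10):
    """Run `argv` `runs` times, each in a new process; return (mean, stdev) in seconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(argv, check=True)
        timings.append(time.perf_counter() - start)
    return statistics.mean(timings), statistics.stdev(timings)


# Example: time a trivial script with the interpreter running this code.
mean, stdev = time_in_fresh_processes([sys.executable, "-c", "pass"], runs=3)
print(f"{mean:.2f} ± {stdev:.2f}s")
```

Starting a new process for every run means that each measurement includes JIT warm-up, which matters when comparing PyPy against CPython on short benchmarks.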

Another interesting number we can look at is how often the JIT started a trace, and for how many traces we produced actual machine code:

| Implementation | Traces Started | Traces sent to backend | Time spent in JIT |

|---|---|---|---|

| PyPy3 JIT old | 216 | 24 | 0.65s |

| PyPy3 JIT new | 30 | 25 | 0.06s |

Here we can clearly see the problem: The old JIT would try tracing the auto-generated templating code again and again, but would never actually produce any machine code for it, wasting lots of time in the process. The new JIT still traces a few times uselessly, but then eventually converges and stops, having emitted machine code for all the paths through the auto-generated Python code.

Related Work

Tim Felgentreff pointed me to the fact that Truffle also has a mechanism to slice huge methods into smaller compilation units (and I am sure other JITs have such mechanisms as well).

Conclusion

In this post we've described a performance cliff in PyPy's JIT, that of really big auto-generated functions which hit the trace limit without inlining, that we still want to generate machine code for. We achieve this by chunking up the trace into several smaller traces, which we compile piece by piece. This is not a super common thing to be happening – otherwise we would have run into and fixed it earlier – but it's still good to have a fix now.

The work described in this post is still a tiny bit experimental, but we will release it as part of the upcoming 3.8 beta release, to get some more experience with it. Please grab a 3.8 release candidate, try it out and let us know your observations, good and bad!

#pypy IRC moves to Libera.Chat

Following the example of many other FOSS projects, the PyPy team has decided to move its official #pypy IRC channel from Freenode to Libera.Chat: irc.libera.chat/pypy

The core devs will no longer be present on the Freenode channel, so we recommend joining the new channel as soon as possible.

wikimedia.org has a nice guide on how to set up your client to migrate from Freenode to Libera.Chat.

PyPy v7.3.5: bugfix release of python 2.7 and 3.7

We are releasing PyPy 7.3.5 with bugfixes for PyPy 7.3.4, released April 4. PyPy 7.3.4 was the first release that runs on Windows 64-bit, so that support is still "beta". We are releasing it in the hopes that we can garner momentum for its continued support, but are already aware of some problems, for instance it errors in the NumPy test suite (issue 3462). Please help out with testing the release and reporting successes and failures, financially supporting our ongoing work, and helping us find the source of these problems.

The new windows 64-bit builds improperly named c-extension modules with the same extension as the 32-bit build (issue 3443)

Use the windows-specific `PC/pyconfig.h` rather than the posix one

Fix the return type for `_Py_HashDouble` which impacts 64-bit windows

A change to the python 3.7 `sysconfig.get_config_var('LIBDIR')` was wrong, leading to problems finding libpypy3-c.so for embedded PyPy (issue 3442).

Instantiate `distutils.command.install` schema for PyPy-specific `implementation_lower`

Delay thread-checking logic in greenlets until the thread is actually started (continuation of issue 3441)

Four upstream (CPython) security patches were applied:

Fix for json-specialized dicts (issue 3460)

Specialize `ByteBuffer.setslice` which speeds up binary file reading by a factor of 3

When assigning the full slice of a list, evaluate the rhs before clearing the list (issue 3440)

On Python2, `PyUnicode_Contains` accepts bytes as well as unicode.

Finish fixing `_sqlite3` - untested `_reset()` was missing an argument (issue 3432)

Update the packaged sqlite3 to 3.35.5 on windows. While not a bugfix, this seems like an easy win.

We recommend updating. These fixes are the direct result of end-user bug reports, so please continue reporting issues as they crop up.

You can find links to download the v7.3.5 releases here:

We would like to thank our donors for the continued support of the PyPy project. If PyPy is not quite good enough for your needs, we are available for direct consulting work. If PyPy is helping you out, we would love to hear about it and encourage submissions to our renovated blog site via a pull request to https://github.com/pypy/pypy.org

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython's JIT even better.

If you are a python library maintainer and use C-extensions, please consider making a CFFI / cppyy version of your library that would be performant on PyPy. In any case both cibuildwheel and the multibuild system support building wheels for PyPy.

What is PyPy?

PyPy is a Python interpreter, a drop-in replacement for CPython 2.7, 3.7, and soon 3.8. It's fast (PyPy and CPython 3.7.4 performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

This PyPy release supports:

x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32/64 bits, OpenBSD, FreeBSD)

big- and little-endian variants of PPC64 running Linux,

s390x running Linux

64-bit ARM machines running Linux.

PyPy does support ARM 32 bit processors, but does not release binaries.

Some Ways that PyPy uses Graphviz

Somebody wrote this super cool thread on Twitter about using Graphviz to make software visualize its internal state:

🧵 Make yours and everybody else's lives slightly less terrible by having all your programs print out their internal stuff as pictures; ✨ a thread ✨ pic.twitter.com/NjQ42bXN2E

— Kate (@thingskatedid) April 24, 2021

PyPy uses this approach a lot too. I collected a few screenshots of that technique on Twitter and thought they would make a nice blog post as well!

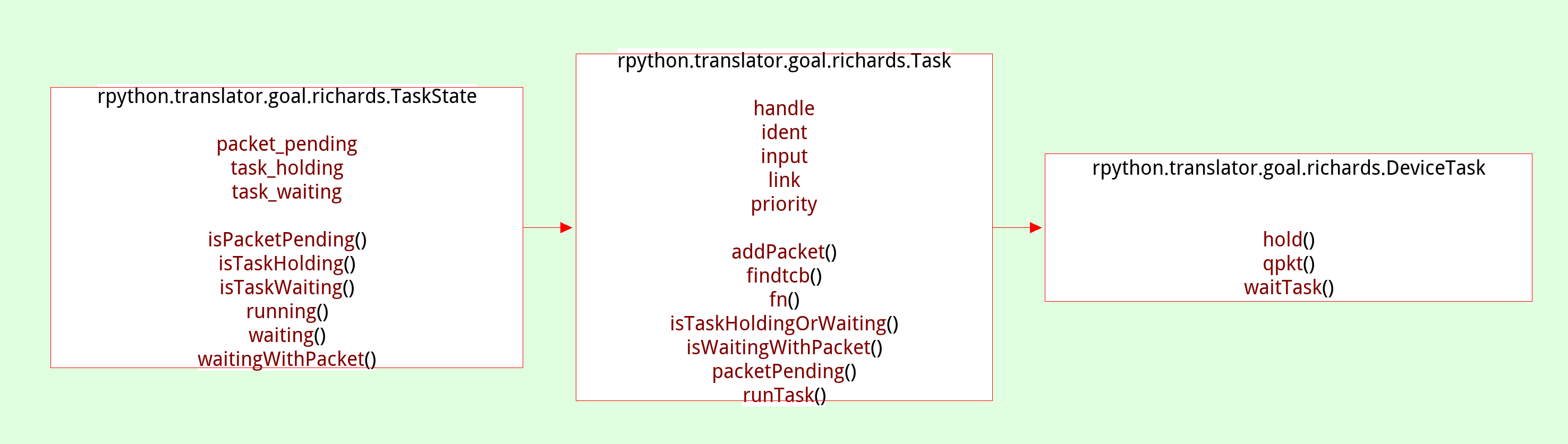

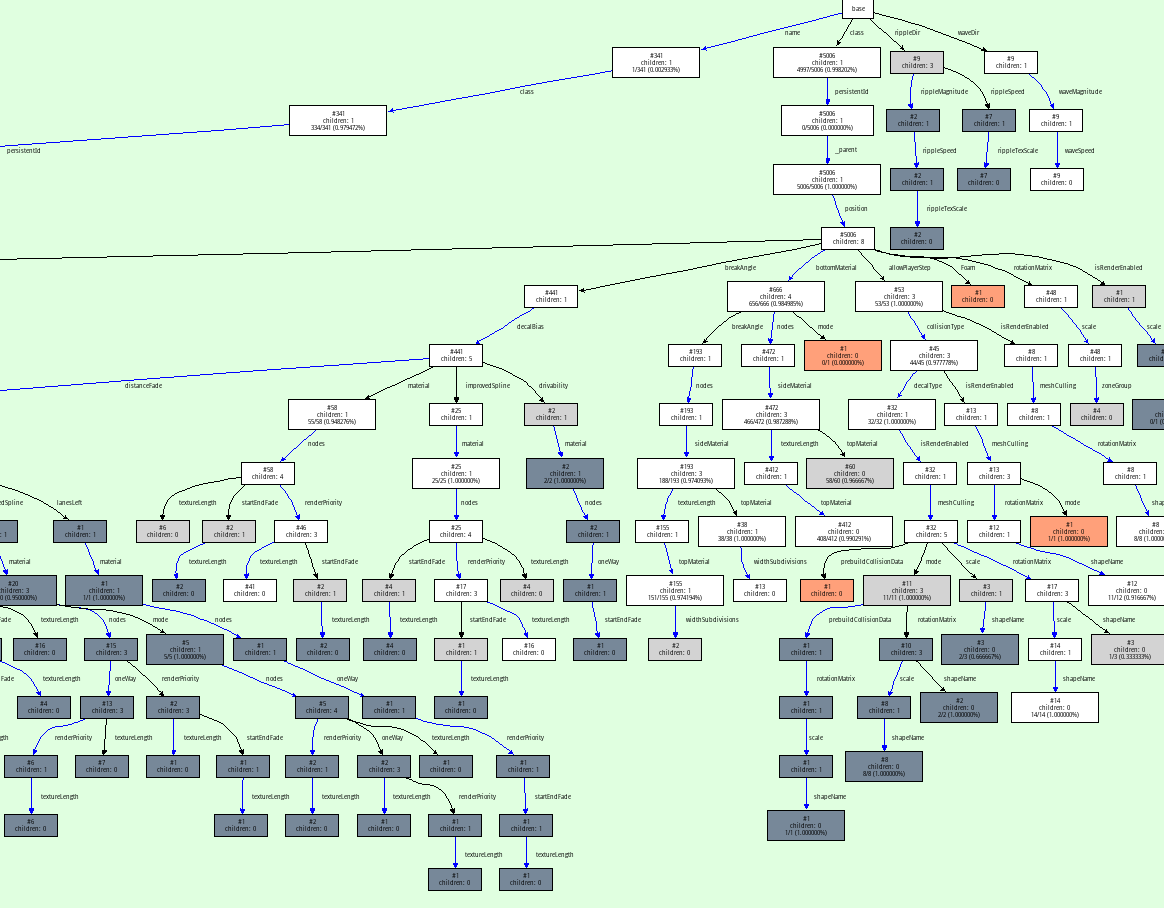

The most important view early in the project, and the one that got our Graphviz visualizations started, shows the control flow graphs of our RPython functions after type inference. The graphs are in static single information (SSI) form, a variant of SSA form. Hovering over a variable shows its inferred type in the footer:

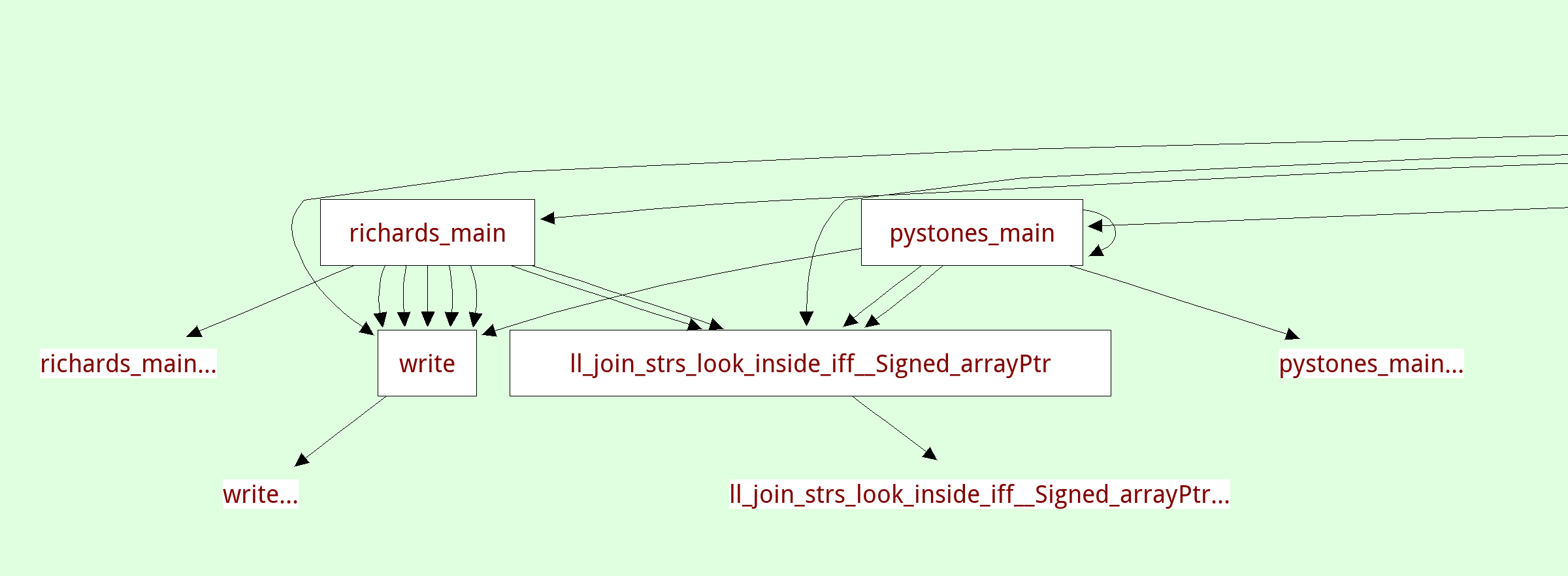

There's another view that shows the inferred call graph of the program:

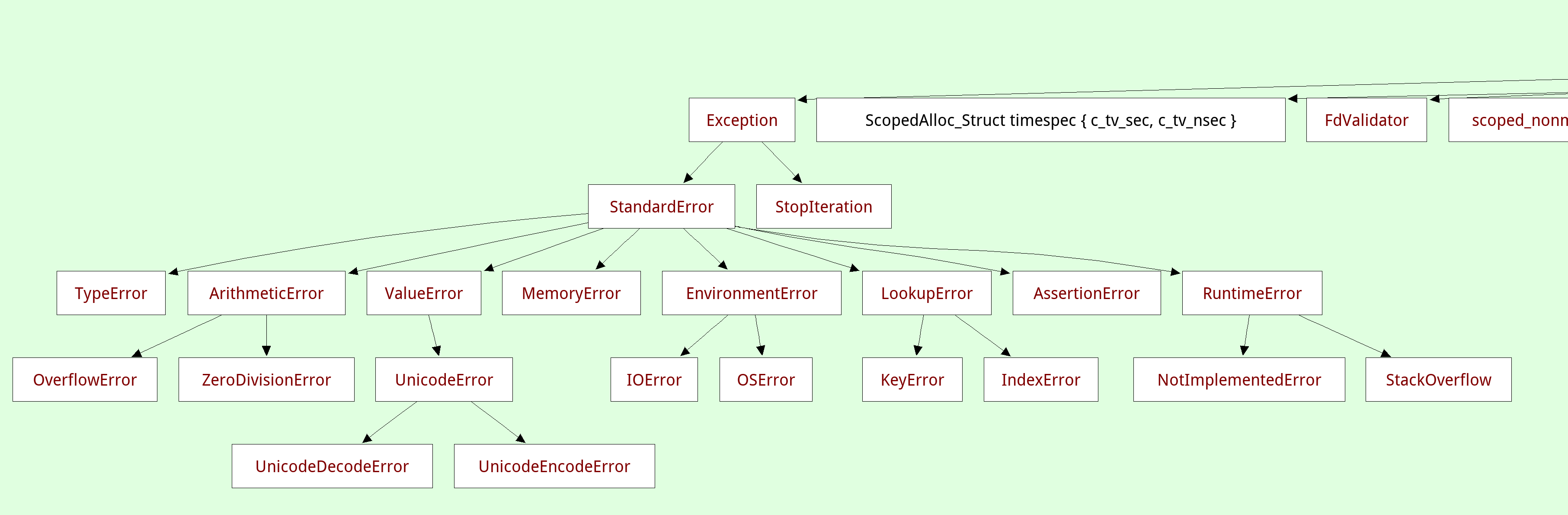

A related viewer shows the inferred class hierarchy (in this case the exception hierarchy) and you can focus on a single class, which will show you its base classes and all the methods and instance attributes that were found:

We also have a view that shows the traces produced by the tracing JIT tests. This viewer doesn't really scale to the big traces that the full Python interpreter produces, but it's really useful during testing:

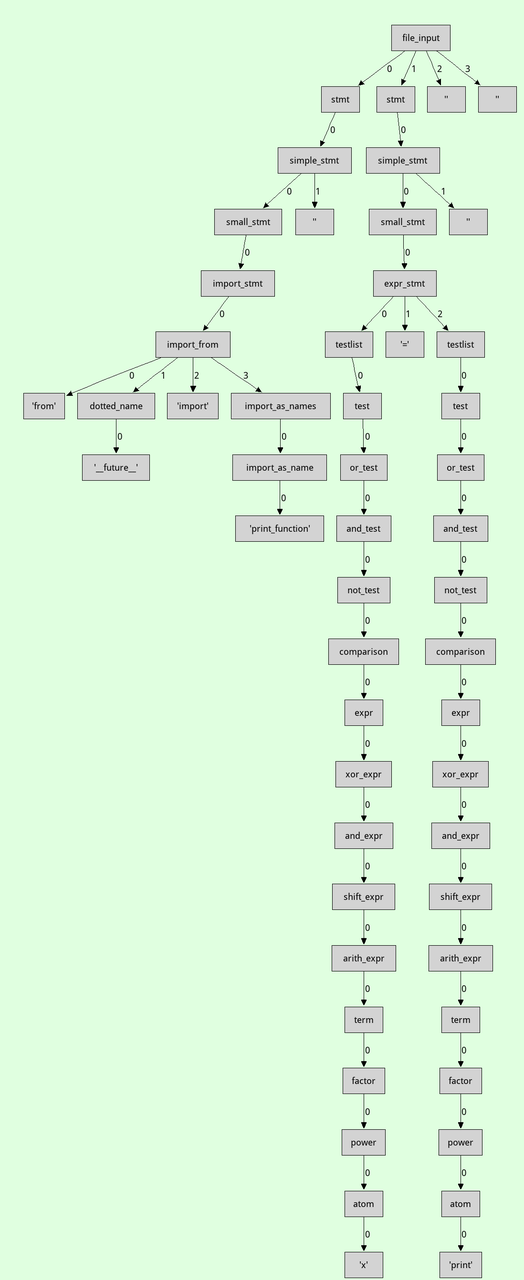

Then there are more traditional tree views, e.g. here is a parse tree for a small piece of Python source code:

On the parsing side, we have also visualized the DFAs of the parser in the past, though the code is unfortunately lost.

All these visualizations are made by walking the relevant data structures and producing a Graphviz input file using a bit of string manipulation, which is quite easy to do. Knowing a bit of Graphviz is a really useful skill: it makes throwaway visualizations super easy.
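To give a feeling for how little code this takes, here is a minimal sketch of the general technique. It is not PyPy's actual visualization code: the helper names `quote` and `to_dot` and the example data are made up for illustration. It walks a nested dict/list structure and builds Graphviz source with plain string formatting.

```python
def quote(text):
    """Quote a string so it can be used as a Graphviz label."""
    return '"' + text.replace("\\", "\\\\").replace('"', '\\"') + '"'


def to_dot(value, name="root"):
    """Return Graphviz source describing a nested dict/list structure.

    Hypothetical helper for illustration, not part of PyPy.
    """
    lines = ["digraph G {", "    node [shape=box];"]

    def visit(obj, label):
        # Give every visited object a fresh node id: n0, n1, ...
        node_id = "n%d" % visit.counter
        visit.counter += 1
        if isinstance(obj, dict):
            children = [(str(key), child) for key, child in obj.items()]
        elif isinstance(obj, list):
            children = [("[%d]" % i, child) for i, child in enumerate(obj)]
        else:
            # Leaf value: show it directly in the node label.
            children = []
            label = "%s = %r" % (label, obj)
        lines.append("    %s [label=%s];" % (node_id, quote(label)))
        for child_label, child in children:
            child_id = visit(child, child_label)
            lines.append("    %s -> %s;" % (node_id, child_id))
        return node_id

    visit.counter = 0
    visit(value, name)
    lines.append("}")
    return "\n".join(lines)


# Made-up example data.
example = {"name": "pypy", "versions": [2.7, 3.7]}
dot_source = to_dot(example)
print(dot_source)
```

Writing the printed text to a file such as `example.dot` and running `dot -Tpng example.dot -o example.png` should render it as an image.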

For example, here is a one-off thing I did when debugging our JSON parser to show the properties of the objects used in a huge example JSON file:

On top of Graphviz, we have a custom tool called the dotviewer, which is written in Python and uses Pygame to give you a zoomable, pannable, searchable way to look at huge Graphviz graphs. All the images in this post are screenshots of that tool. In its simplest form it takes any .dot files as input.

Here's a small video of the dotviewer, moving around and searching in the JSON graph. By writing a bit of extra Python code, the dotviewer can also be extended to add hyperlinks in the graphs to navigate to different views (for example, we did that for the call graphs above).

All in all, this is a really powerful approach for understanding the behaviour of a piece of code or for debugging complicated problems, and we have gotten a huge amount of mileage out of it over the years. It can be seen as an instance of moldable development ("a way of programming through which you construct custom tools for each problem"). And it's really easy to get into! The Graphviz language is quite a simple text-based language that can be applied to a huge number of different visualization situations.

PyPy v7.3.4: release of python 2.7 and 3.7

The PyPy team is proud to release the version 7.3.4 of PyPy, which includes two different interpreters:

PyPy2.7, which is an interpreter supporting the syntax and the features of Python 2.7, including the stdlib for CPython 2.7.18+ (the + is for backported security updates)

PyPy3.7, which is an interpreter supporting the syntax and the features of Python 3.7, including the stdlib for CPython 3.7.10. We no longer refer to this as beta-quality as the last incompatibilities with CPython (in the re module) have been fixed.

We are no longer releasing a Python3.6 version, as we focus on updating to Python 3.8. We have begun streaming the advances towards this goal on Saturday evenings European time on https://www.twitch.tv/pypyproject. If Python3.6 is important to you, please reach out as we could offer sponsored longer term support.

The two interpreters are based on much the same codebase, thus the multiple release. This is a micro release; all APIs are compatible with the other 7.3 releases. Highlights of the release include binary Windows 64 support, faster numerical instance fields, and a preliminary HPy backend.

A new contributor (Ondrej Baranovič - thanks!) took us up on the challenge to get Windows 64-bit support. The work has been merged and for the first time we are releasing a 64-bit Windows binary package.

The release contains the biggest change to PyPy's implementation of the instances of user-defined classes in many years. The optimization was motivated by the report of performance problems running a numerical particle emulation. We implemented an optimization that stores int and float instance fields in an unboxed way, as long as these fields are type-stable (meaning that the same field always stores the same type, using the principle of type freezing). This gives significant performance improvements on numerical pure-Python code, and other code where instances store many integers or floating point numbers.
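As a rough illustration of what "type-stable" means at the source level, consider the following sketch. The `Particle` class and the `field_types` helper are hypothetical and only show the property described above. The code does not inspect PyPy internals, and whether the optimization actually applies to a given program depends on the interpreter.

```python
class Particle:
    def __init__(self, x, v):
        self.x = x  # position
        self.v = v  # velocity


def step(particles, dt):
    """Advance every particle by one time step."""
    for p in particles:
        p.x += p.v * dt


def field_types(objects, name):
    """Return the set of Python types stored in the given field."""
    return {type(getattr(obj, name)) for obj in objects}


# Type-stable: the field x always holds a float.
stable = [Particle(float(i), 1.0) for i in range(3)]

# Not type-stable: x holds an int in one instance and a float in another.
unstable = [Particle(0, 1.0), Particle(1.5, 1.0)]

print(field_types(stable, "x"))
print(field_types(unstable, "x"))

step(stable, 0.5)
```

Writing `Particle(0.0, 1.0)` rather than `Particle(0, 1.0)` is the kind of small change that keeps a field type-stable in the sense described above.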

There were also a number of optimizations for methods around strings and bytes, following user reported performance problems. If you are unhappy with PyPy's performance on some code of yours, please report an issue!

A major new feature is preliminary support for the Universal mode of HPy: a new way of writing c-extension modules to totally encapsulate PyObject*. The goal, as laid out in the HPy documentation and recent HPy blog post, is to enable a migration path for c-extension authors who wish their code to be performant on alternative interpreters like GraalPython (written on top of the Java virtual machine), RustPython, and PyPy. Thanks to Oracle and IBM for sponsoring work on HPy.

Support for the vmprof statistical profiler has been extended to ARM64 via a built-in backend.

Several issues exposed in the 7.3.3 release were fixed. Many of them came from the great work ongoing to ship PyPy-compatible binary packages in conda-forge. A big shout out to them for taking this on.

Development of PyPy takes place on https://foss.heptapod.net/pypy/pypy. We have seen an increase in the number of drive-by contributors who are able to use GitLab + Mercurial to create merge requests.

The CFFI backend has been updated to version 1.14.5 and the cppyy backend to 1.14.2. We recommend using CFFI rather than C-extensions to interact with C, and using cppyy for performant wrapping of C++ code for Python.

As always, we strongly recommend updating to the latest versions. Many fixes are the direct result of end-user bug reports, so please continue reporting issues as they crop up.

You can find links to download the v7.3.4 releases here:

We would like to thank our donors for the continued support of the PyPy project. If PyPy is not quite good enough for your needs, we are available for direct consulting work. If PyPy is helping you out, we would love to hear about it and encourage submissions to our renovated blog site via a pull request to https://github.com/pypy/pypy.org

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: PyPy and RPython documentation improvements, tweaking popular modules to run on PyPy, or general help with making RPython's JIT even better. Since the previous release, we have accepted contributions from 10 new contributors. Thanks for pitching in, and welcome to the project!

If you are a Python library maintainer and use C-extensions, please consider making a CFFI / cppyy version of your library that would be performant on PyPy. In any case, both cibuildwheel and the multibuild system support building wheels for PyPy.

What is PyPy?

PyPy is a Python interpreter, a drop-in replacement for CPython 2.7, 3.7, and soon 3.8. It's fast (PyPy and CPython 3.7.4 performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

This PyPy release supports:

x86 machines on most common operating systems (Linux 32/64 bits, Mac OS X 64 bits, Windows 32/64 bits, OpenBSD, FreeBSD)

big- and little-endian variants of PPC64 running Linux,

s390x running Linux

64-bit ARM machines running Linux.

PyPy does support ARM 32 bit processors, but does not release binaries.

What else is new?

For more information about the 7.3.4 release, see the full changelog.

Please update, and continue to help us make PyPy better.

Cheers, The PyPy team

New HPy blog

Regular readers of this blog already know about HPy, a project which aims to develop a new C API for Python to make it easier/faster to support C extensions on alternative Python implementations, including PyPy.

The HPy team just published the first post on the new HPy blog, so if you are interested in its development, make sure to check it out!